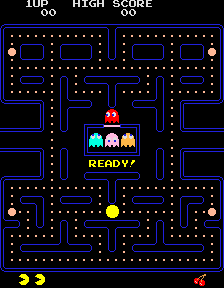

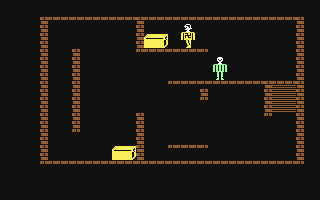

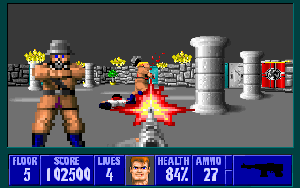

Games evolved with:

Factors:

Software trick example: the 'fast inverse square root', which approximates 1/√x (used, for instance, to normalize vectors in lighting computations):

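```c
#include <stdint.h>
#include <string.h>

/* Approximates 1/sqrt(x) for x > 0 */
float rsqrt(float x)
{
    float h = x / 2;
    uint32_t i;
    memcpy(&i, &x, sizeof i);       /* reinterpret the float's bits as an integer */
    i = 0x5f3759df - (i >> 1);      /* "magic" constant yields a first guess */
    memcpy(&x, &i, sizeof x);       /* back to float: x now holds the guess */
    return x * (1.5f - h * x * x);  /* one Newton-Raphson step refines it */
}
```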
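Where `1.5f - h*x*x` comes from: to approximate y = 1/√x, apply Newton's method to f(y) = 1/y² - x, whose positive root is 1/√x. Since f'(y) = -2/y³, the update y1 = y0 - f(y0)/f'(y0) simplifies to y1 = y0·(1.5 - 0.5·x·y0²), which is the function's last line with h = x/2 and the guess stored back in `x`. The C sketch below repeats the function (using `memcpy` rather than pointer casts, which break C's strict-aliasing rule) and adds a hypothetical helper, `rsqrt_residual`, to check accuracy: if y ≈ 1/√x then x·y² ≈ 1, and |x·y² - 1| is roughly twice the relative error of y.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Fast inverse square root, repeated so this snippet compiles on its own. */
float rsqrt(float x)
{
    assert(x > 0.0f);                /* only meaningful for positive inputs */
    float h = x * 0.5f;              /* x/2, needed by the Newton step */
    uint32_t i;
    memcpy(&i, &x, sizeof i);        /* float bits -> integer */
    i = 0x5f3759dfu - (i >> 1);      /* bit-level first guess of 1/sqrt(x) */
    memcpy(&x, &i, sizeof x);        /* integer bits -> float: x now holds y0 */
    return x * (1.5f - h * x * x);   /* y1 = y0 * (1.5 - 0.5 * x * y0^2) */
}

/* Hypothetical accuracy check (not part of the original trick):
   returns |x * y^2 - 1| for y = rsqrt(x), computed in double precision. */
double rsqrt_residual(float x)
{
    double y = rsqrt(x);
    double r = (double)x * y * y - 1.0;
    return r < 0.0 ? -r : r;
}
```

A residual below 0.004 means a relative error below about 0.2%, roughly the accuracy usually quoted for this one-step version.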

Hardware factors:

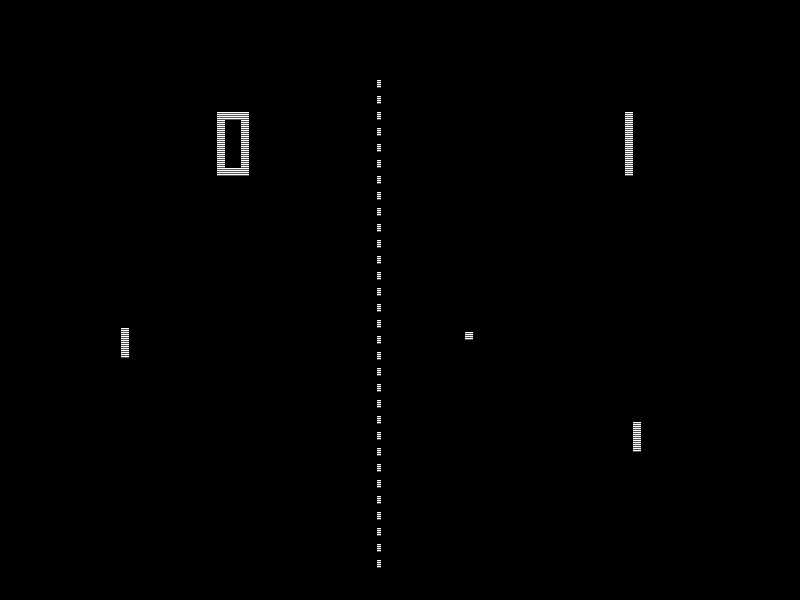

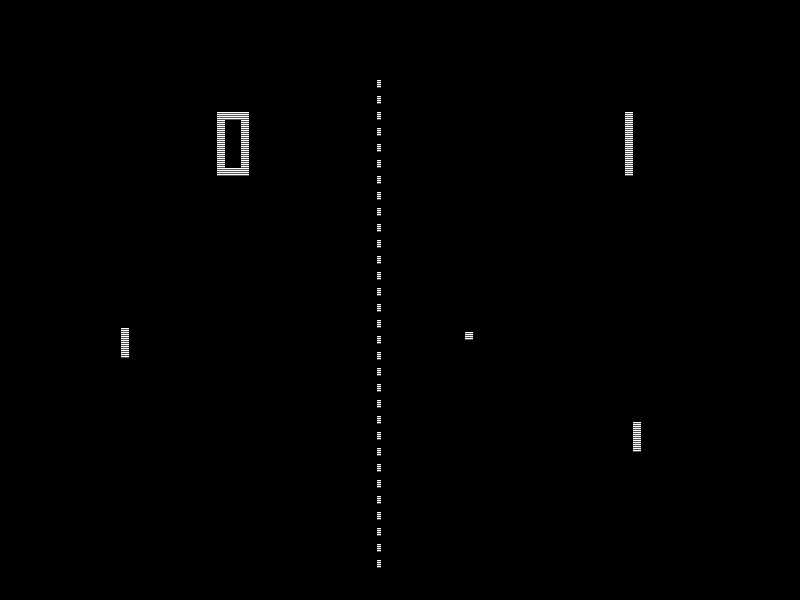

CGA (Color Graphics Adapter), 1981:

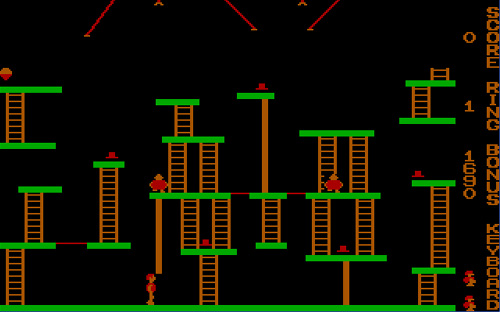

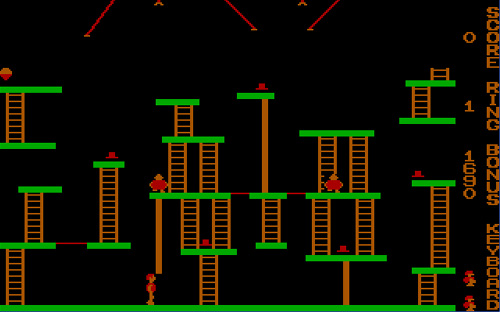

EGA (Enhanced Graphics Adapter), 1984:

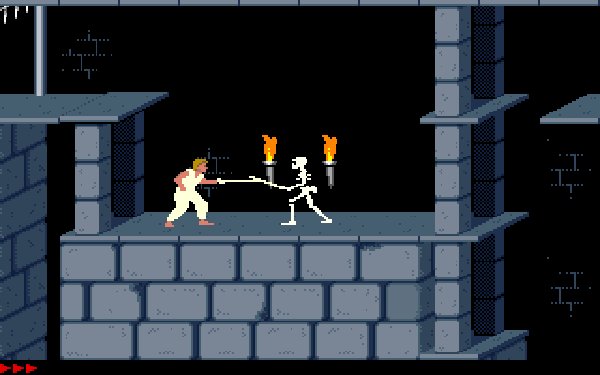

VGA (Video Graphics Array), 1987:

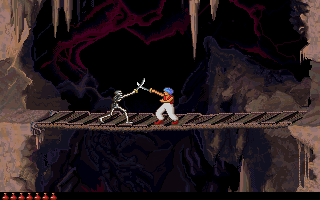

IBM 8514, 1987:

IBM XGA, 1990:

Mid-1990s: graphical user interfaces (essentially Windows) become commonplace on consumer PCs, and they benefit from hardware-accelerated 2D graphics. Leading companies: S3, Matrox, ATI.

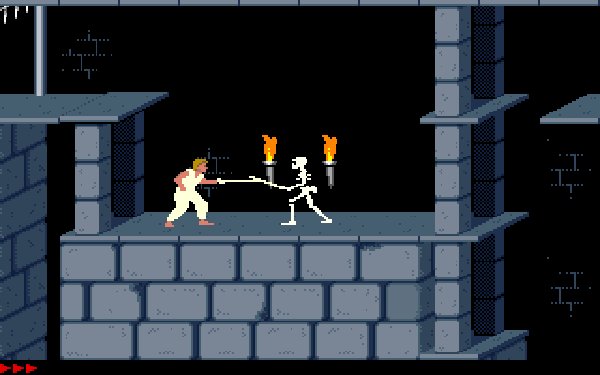

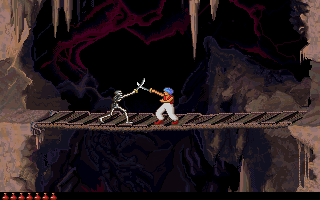

Games and gamers wanted 3D graphics!

Auxiliary cards emerge to handle scene rendering. The primary example is the 3Dfx Voodoo: programs must be written specifically to use it, but the quality justifies the effort (commercially, too).


1990s: the state of the art in professional (not consumer) 3D graphics is SGI, whose hardware is programmed through a proprietary API called IrisGL.


Competition from Sun, HP and IBM leads SGI to release an open subset of IrisGL: OpenGL.

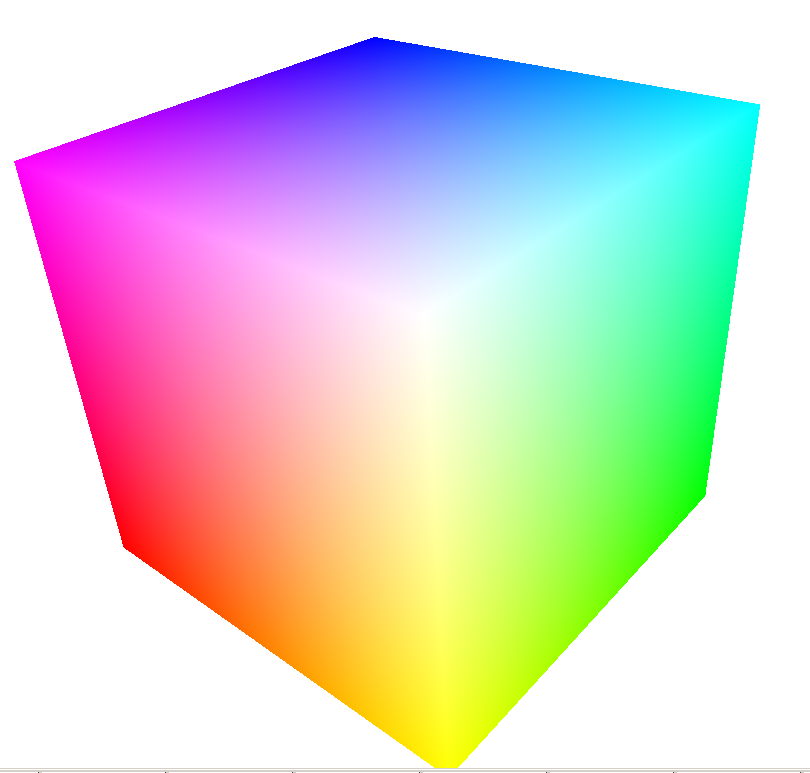

OpenGL defines a set of standard functions for describing 2D and 3D 'scenes'. The API can draw points, lines and polygons, set the position of the viewer and of the light sources, and define colouring and textures.
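Behind 'setting the viewer' is a geometric step the API performs for every vertex. As a rough sketch in plain C (not OpenGL code), the function below projects a point given in the viewer's own coordinate frame onto a 2D image plane. It assumes the OpenGL eye-space convention (viewer at the origin, looking down the negative z axis) and an image plane at distance `d`, playing the role of the near-plane distance that legacy OpenGL passes to `glFrustum`; the types and the `project` name are hypothetical.

```c
#include <assert.h>

/* Hypothetical types for this sketch (not part of the OpenGL API). */
typedef struct { float x, y, z; } vec3;
typedef struct { float x, y; } vec2;

/* Perspective projection of a point in viewer (eye) coordinates.
   Assumes the viewer sits at the origin looking down -z, with the image
   plane at distance d in front of it; similar triangles give
   x' = d * x / -z and y' = d * y / -z. */
vec2 project(vec3 p, float d)
{
    assert(p.z < 0.0f);              /* point must be in front of the viewer */
    vec2 s = { d * p.x / -p.z, d * p.y / -p.z };
    return s;
}
```

For example, `project((vec3){1, 2, -4}, 1)` gives (0.25, 0.5); moving the same point twice as far away, to z = -8, halves both coordinates, which is the perspective foreshortening seen on screen.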

OpenGL is a cross-platform specification; DirectX/Direct3D is a proprietary Microsoft Windows specification aimed at games. Its advanced features include vertex and fragment shading: small programs that manipulate individual 3D vertices or individual 2D pixels. These features are later included in OpenGL 2.0 too.
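The vertex/fragment split can be illustrated on the CPU. Real shaders are written in shading languages such as HLSL (Direct3D) or GLSL (OpenGL) and run on the GPU; this C sketch only mimics the programming model, in which a 'vertex shader' is a function invoked once per vertex and a 'fragment shader' a function invoked once per pixel. The function names, the translate and grayscale effects, and the pixel format are assumptions chosen for illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical vertex and pixel formats for this sketch. */
typedef struct { float x, y, z; } vertex;
typedef struct { uint8_t r, g, b; } pixel;

/* "Vertex shader": called once per vertex; here it shifts the vertex along x. */
static vertex vertex_shader(vertex v)
{
    v.x += 1.0f;
    return v;
}

/* "Fragment shader": called once per pixel; here it converts the color to gray. */
static pixel fragment_shader(pixel p)
{
    uint8_t gray = (uint8_t)((p.r + p.g + p.b) / 3);
    pixel out = { gray, gray, gray };
    return out;
}

/* A GPU runs these invocations in parallel; this sketch simply loops. */
void run_vertex_stage(vertex *vs, size_t n)
{
    for (size_t k = 0; k < n; k++)
        vs[k] = vertex_shader(vs[k]);
}

void run_fragment_stage(pixel *ps, size_t n)
{
    for (size_t k = 0; k < n; k++)
        ps[k] = fragment_shader(ps[k]);
}
```

Because each invocation sees only its own vertex or pixel, the work is independent per element, which is what makes it suitable for massively parallel hardware.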

Video cards may implement only a subset of these APIs in hardware; the rest is emulated in software (and runs on the CPU).
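One way to picture this split is a per-feature choice, made once at start-up, between an accelerated path and a CPU fallback. The C sketch below does this with a function pointer; the blending feature, the capability flag and all names are hypothetical, and the 'hardware' function is only a placeholder that computes the same result (plus a call counter so the chosen path is observable).

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical feature: a 50/50 blend of two 8-bit color channels. */
typedef uint8_t (*blend_fn)(uint8_t a, uint8_t b);

/* Counts uses of the placeholder "hardware" path (sketch only). */
static unsigned hardware_calls = 0;

/* Software emulation: always available, but runs on the CPU. */
static uint8_t blend_software(uint8_t a, uint8_t b)
{
    return (uint8_t)((a + b) / 2);
}

/* Placeholder for the accelerated path; a real driver would hand the work
   to the card here. This sketch just computes the same value. */
static uint8_t blend_hardware(uint8_t a, uint8_t b)
{
    hardware_calls++;
    return (uint8_t)((a + b) / 2);
}

/* Chosen once, from the capabilities the card reports. */
blend_fn select_blend(bool card_supports_blend)
{
    return card_supports_blend ? blend_hardware : blend_software;
}
```

The program calls the same function pointer either way; only speed and CPU load differ between the two paths.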

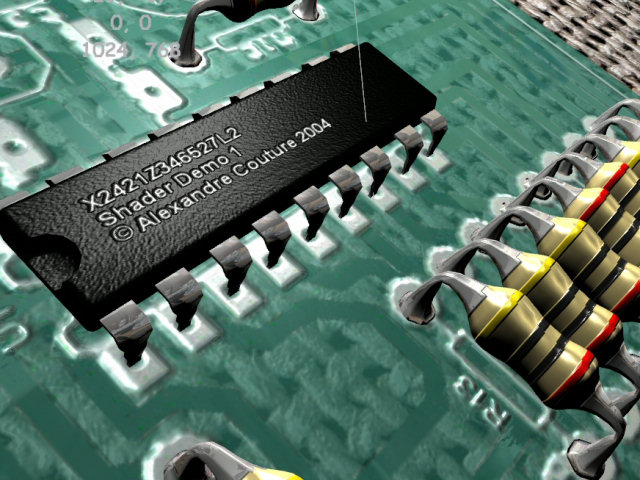

Hardware support for 3D graphics acceleration leads to the GPU (Graphics Processing Unit): a processor dedicated to the complex rendering operations, while the CPU takes care of AI, player control, the physics engine, etc.